
Prompt Engineering vs. Fine-Tuning: The Easiest Explanation

Applications like ChatGPT, Gemini, and Claude are powerful AI tools that can answer questions, write stories, or process data. But how can we get them to do a better job on some tasks? Two popular strategies are prompt engineering and fine-tuning. Though both aim to improve AI, they do it in quite distinct ways.

In this article, we will define what prompt engineering and fine-tuning are, how they operate, and when to apply them. If you are new to AI or simply interested, this guide will cover everything in simple terms.

What is Prompt Engineering?

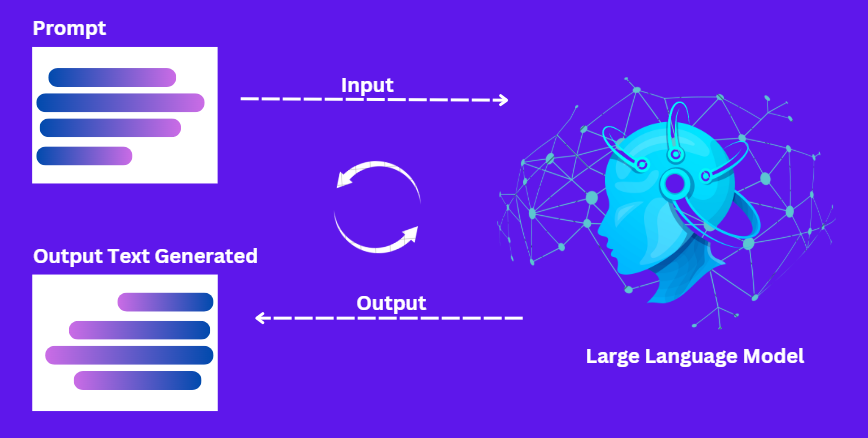

Prompt engineering is the art of creating effective instructions (called “prompts”) to produce excellent output from an AI model. It is like asking a question in such a manner that the AI is able to provide you with the answer you need.

How Does It Work?

Think of it as talking to a chef. If you say, “Make me something to eat,” they could cook you anything. But if you say, “Make me a vegetarian pizza with extra cheese,” you’ll get exactly what you asked for. Prompt engineering works the same way: specific, detailed prompts steer the AI toward the response you want.

Example:

- Weak Prompt: “Write a story.”

Result: The AI writes a generic story.

- Engineered Prompt: “Write a 300-word story about a robot who loves gardening. Use a funny tone and include a twist at the end.”

Result: The AI generates a specific, engaging story.
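The gap between the weak and the engineered prompt can also be shown in code. The following is a minimal sketch, not a standard tool: `build_prompt` and its parameters are hypothetical names made up for this illustration. It simply assembles a prompt from whatever details you supply, so leaving details out produces the weak prompt and filling them in produces the engineered one.

```python
def build_prompt(kind, topic=None, word_count=None, tone=None, extras=()):
    """Assemble a writing prompt from optional details (hypothetical helper)."""
    # Optional length, e.g. "300-word "
    length = f"{word_count}-word " if word_count else ""
    sentence = f"Write a {length}{kind}"
    if topic:
        sentence += f" about {topic}"
    sentence += "."

    # Optional style instructions, joined into one follow-up sentence
    details = []
    if tone:
        details.append(f"Use a {tone} tone")
    details.extend(extras)
    if details:
        sentence += " " + " and ".join(details) + "."
    return sentence


# No details supplied: the weak prompt
weak = build_prompt("story")

# Topic, length, tone, and an extra instruction supplied: the engineered prompt
engineered = build_prompt(
    "story",
    topic="a robot who loves gardening",
    word_count=300,
    tone="funny",
    extras=["include a twist at the end"],
)

print(weak)
print(engineered)
```

Every argument you fill in removes one thing the AI would otherwise have to guess.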

Key Features of Prompt Engineering

- Clear and Specific Inputs: State the task, topic, length, tone, and format explicitly instead of leaving the AI to guess.

- Understanding AI Limitations: AI models do not understand context the way humans do. They require explicit instructions.

- Iterative Process: The best results usually come from trying several prompt variations and refining them until the output matches what you need.
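The iterative process can be sketched as a small loop: try each candidate prompt, score the output, and keep the best one. This is only an illustration under stated assumptions. `stub_generate` is a made-up stand-in for a real AI call, and `score` is a hypothetical checklist. In practice you would call an actual model and judge the output yourself or with your own metric.

```python
def pick_best_prompt(candidates, generate, score):
    """Try every candidate prompt and return (prompt, output, score) of the best."""
    best = None
    for prompt in candidates:
        output = generate(prompt)
        points = score(output)
        if best is None or points > best[2]:
            best = (prompt, output, points)
    return best


def stub_generate(prompt):
    # Stand-in for a real model call (assumption): the "story" only
    # contains the elements that the prompt explicitly asked for.
    text = "Once upon a time there was a story."
    if "robot" in prompt:
        text += " A robot tended its garden."
    if "twist" in prompt:
        text += " In the end, the garden was on the Moon."
    return text


def score(output):
    # Hypothetical checklist: count how many required elements appear.
    required = ["robot", "garden", "Moon"]
    return sum(word in output for word in required)


candidates = [
    "Write a story.",
    "Write a story about a robot who loves gardening.",
    "Write a story about a robot who loves gardening with a twist at the end.",
]

best_prompt, best_output, best_score = pick_best_prompt(candidates, stub_generate, score)
print(best_prompt, best_score)
```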

When to Use Prompt Engineering?

- Quick Fixes: Adjust outputs with minimal technical effort (e.g., marketing teams refining chatbot responses).

- Low Budget: No expensive training is required.

- General Tasks: Writing content, brainstorming, or just Q&A.

- Experimentation: Try out ideas without making any permanent change to the model.

What is Fine-Tuning?

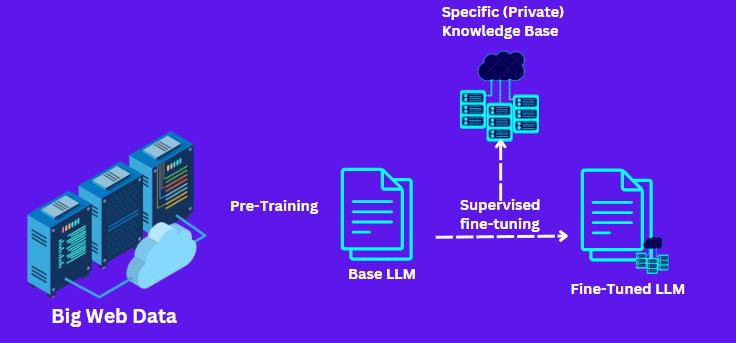

Fine-tuning means further training an existing, pre-trained AI model on new data so that it performs a particular task better. It is like having a trained chef practice one new recipe again and again until they master it.

How Does It Work?

AI models are first trained on a huge amount of broad data, such as books and websites. Fine-tuning then continues that training on a smaller, targeted dataset. This adjusts the model’s “brain” (the weights of its neural network) so that it performs better in a specific area.

Example:

Think of it like teaching a generalist to become a specialist.

For example, a language model trained on a broad range of text might not be good at medical diagnosis. By training it further on medical texts and information, you make its abilities better suited to the medical field.
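The generalist-to-specialist idea can be illustrated with a deliberately tiny sketch. Real fine-tuning adjusts a neural network with millions or billions of weights using dedicated libraries. Here the "model" is just a line with two weights, and the data, learning rate, and step counts are all assumptions chosen for illustration. The point it shows is the same, though: training continues from the existing weights on a small, targeted dataset, and the weights themselves change.

```python
def mse(w, b, data):
    """Mean squared error of the line y = w*x + b on the given data."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def train(w, b, data, lr=0.01, steps=500):
    """Plain gradient descent, starting from the weights passed in."""
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# "Broad" data the model is first trained on (toy assumption: y = 2x)
general_data = [(x, 2.0 * x) for x in range(-5, 6)]
# Small "specialist" dataset that follows a different rule (y = 3x + 1)
special_data = [(x, 3.0 * x + 1.0) for x in (1, 2, 3, 4)]

# Pre-training: start from scratch on the broad data
w, b = train(0.0, 0.0, general_data)
loss_before = mse(w, b, special_data)

# Fine-tuning: continue from the pre-trained weights on the small dataset
w_ft, b_ft = train(w, b, special_data, steps=200)
loss_after = mse(w_ft, b_ft, special_data)

print("weights before:", w, b)
print("weights after: ", w_ft, b_ft)
print("specialist loss before/after:", loss_before, loss_after)
```

Note that fine-tuning starts from the pre-trained weights rather than from zero, which is why it can work with far less data than the original training.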

Key Features of Fine-Tuning

- Customized Training: Fine-tuning means training the AI further on more specific data.

- Compute-Intensive: It typically requires more computing power and takes longer than prompt engineering.

- Deep Customization: It changes the model’s internal weights, which makes the model significantly more specialized.

When to Apply Fine-Tuning?

- Specialized Tasks: Medical diagnosis, legal document analysis, or technical support.

- Consistent Outputs: Tasks that require specific vocabulary or a fixed layout (e.g., preparing medical reports).

- Proprietary Data: When utilizing internal data (e.g., company emails) to customize the AI.

- Long-Term Projects: A worthy investment for valuable work.

Prompt Engineering vs. Fine-Tuning: Key Differences

This table provides a quick glance at the key differences, helping you decide which method is best for your needs.

| Feature | Prompt Engineering | Fine-Tuning |
| --- | --- | --- |
| Definition | Crafting clear, detailed inputs to guide AI responses. | Retraining a pre-trained model on new, task-specific data. |
| Customization Level | Adjusts inputs without altering the model’s parameters. | Changes the internal weights for deep customization. |
| Resource Requirements | Minimal computing power, quick iterations. | High computational cost, requires extensive data and time. |
| Speed and Efficiency | Faster to implement, great for rapid prototyping. | Slower process, demands thorough preparation and testing. |
| Use Cases | Chatbots, content generation, and general Q&A. | Specialized applications like medical diagnosis and legal analysis. |
| Flexibility | Highly flexible, easy to update and refine prompts. | Less flexible once the model is fine-tuned; it requires retraining to change. |
| Risk of Overfitting | Low, as the model’s parameters remain unchanged. | Higher risk if the training dataset is too narrow or small. |

When to Choose Which Technique?

The decision between fine-tuning and prompt engineering is based on several factors:

- Flexibility: If you expect your needs to change often, prompt engineering lets you adapt without retraining the model.

- Project Scope: If you need rapid improvements within a narrow scope, prompt engineering may suffice. For bigger, specialized applications, fine-tuning is generally the better option.

- Availability of Resources: Prompt engineering is less expensive and less resource-hungry. Fine-tuning is compute-intensive and needs a solid dataset.

- Desired Accuracy: For everyday tasks, a good prompt can yield good results. But where high accuracy is critical (such as in healthcare or legal cases), fine-tuning offers deeper customization that can improve performance.
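The factors above can be condensed into a rough rule of thumb. The sketch below is only one possible way to encode them. The function name, its parameters, and the order of the checks are assumptions made for this illustration, and a real decision would weigh costs and data quality in more detail.

```python
def suggest_technique(requirements_change_often, has_quality_dataset,
                      has_compute_budget, accuracy_critical):
    """Rough rule of thumb based on the four factors (hypothetical helper)."""
    # Fine-tuning is only feasible with both a solid dataset and compute.
    can_fine_tune = has_quality_dataset and has_compute_budget
    if not can_fine_tune:
        return "prompt engineering"
    if accuracy_critical and requirements_change_often:
        # Specialize the model, but keep adapting through prompts.
        return "both"
    if accuracy_critical:
        return "fine-tuning"
    # Everyday tasks: a good prompt is usually enough.
    return "prompt engineering"


# A small team with no training data or compute budget
print(suggest_technique(True, False, False, False))
# A stable, accuracy-critical project with data and compute available
print(suggest_technique(False, True, True, True))
```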

Conclusion

Prompt engineering and fine-tuning are both central ways of improving AI results.

- Prompt engineering is about crafting the optimal input to elicit improved responses from the AI. It is fast, nimble, and low-resource.

- Fine-tuning is the process of retraining the model with new data in order to modify its internal weights. It makes the model highly specialized and is best suited to cases where high accuracy and specialization are crucial.

By understanding the key components, benefits, and drawbacks of each approach, you can decide which one (or even both) to use on your project. Whether you are creating a chatbot, enhancing content generation, or tailoring an AI system to perform a specific task, both prompt engineering and fine-tuning offer ways to get better results from AI.
